At HackerOne, we understand the challenge of maintaining robust security in your codebase. That’s why our PullRequest product incorporates Smart Review Selection. This post looks at a cornerstone of that feature: our Security Hotspot AI model, a tool designed to change the way we approach secure code reviews by focusing on risk areas and potential security vulnerabilities.

Traditional methods of identifying security vulnerabilities involve noisy hard-coded checks, yet they often fall short due to the sheer volume and complexity of modern codebases. To address this, we’ve developed an innovative approach that combines AI and human expertise to enhance the efficiency and effectiveness of security reviews.

Security Hotspots

A Security Hotspot is a section of code that is more likely to contain security risks. The concept is not new: expert security engineers already do this naturally, identifying the areas of their application where vulnerabilities are most likely to be introduced and focusing their attention there. This concept is pivotal in our Smart Review Selection process. Instead of asking:

Does this code contain any security vulnerabilities?

We train our models to answer a more nuanced question:

Has code similar to this produced security issues in the past?

This rephrasing of the problem allows us to apply machine learning more effectively, creating a powerful tool to aid our human reviewers. It contrasts with the approach of purely software-based competitors, who don’t have the advantage of a human in the loop.

The Inner Workings of Our AI Model

Our Security Hotspot detection process begins by filtering out less relevant sections of code, such as unit tests or code not typically run in production. We then apply a series of neural networks to both the metadata about the code and the code itself. This allows us to identify files and sections within files that present a higher security risk.
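The filtering step described above can be pictured with a minimal sketch. This is an illustration under stated assumptions, not our production logic: the directory names, file suffixes, and the `is_review_candidate` helper are all hypothetical.

```python
from pathlib import PurePosixPath

# Hypothetical heuristics: directory and file-name patterns that usually
# indicate tests, fixtures, or vendored/generated code.
SKIP_DIRS = {"test", "tests", "__tests__", "spec", "fixtures", "vendor", "node_modules"}
SKIP_SUFFIXES = ("_test.py", "_test.go", ".spec.ts", ".test.js", ".min.js")


def is_review_candidate(path: str) -> bool:
    """Return True if the file looks like production code worth scoring."""
    p = PurePosixPath(path)
    # Skip anything that lives under a test-like or vendored directory.
    if any(part.lower() in SKIP_DIRS for part in p.parts[:-1]):
        return False
    name = p.name.lower()
    # Skip files whose own name marks them as tests or minified output.
    if name.startswith("test_") or name.endswith(SKIP_SUFFIXES):
        return False
    return True


changed_files = [
    "app/db/queries.py",
    "tests/test_queries.py",
    "app/db/queries_test.go",
    "web/src/search.ts",
    "web/src/search.spec.ts",
]
candidates = [f for f in changed_files if is_review_candidate(f)]
print(candidates)  # ['app/db/queries.py', 'web/src/search.ts']
```

Only the files that survive a filter like this would be passed on to the more expensive model-based scoring.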

Training with Real Data

The backbone of our AI models is a custom curated dataset composed of millions of code review comments spanning many programming languages. We are able to leverage this dataset to isolate comments that are highly correlated with security issues and use them to set the goals for our AI models. By analyzing these comments and the associated code, we’ve trained our transformer-based neural network models to recognize patterns and indicators of high-risk code. This is akin to how a seasoned security engineer would use their extensive experience to notice subtle nuances and patterns that could indicate a potential security issue.
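To give a rough sense of how review comments can become training targets, here is a deliberately simplified sketch. It assumes a keyword match is enough to mark a comment as security-related; the term list and the `label_examples` helper are hypothetical, and a real pipeline would likely rely on a richer signal than keywords.

```python
import re

# Hypothetical list of terms that suggest a security-related review comment.
SECURITY_TERMS = re.compile(
    r"\b(sql injection|injection|xss|csrf|sanitiz\w*|escap\w*|vulnerab\w*|unvalidated)\b",
    re.IGNORECASE,
)


def label_examples(reviews):
    """Turn (code, comment) pairs into {"code", "label"} training examples.

    label is 1 when the comment mentions a security term, else 0.
    """
    return [
        {"code": code, "label": int(bool(SECURITY_TERMS.search(comment)))}
        for code, comment in reviews
    ]


reviews = [
    ('cursor.execute("... " + name)',
     "User input is concatenated here; this allows SQL injection."),
    ("total = sum(prices)",
     "Nit: rename `total` to `subtotal`."),
]
labeled = label_examples(reviews)
print([example["label"] for example in labeled])  # [1, 0]
```

The point of the sketch is the shape of the data: code paired with a label derived from what human reviewers actually said about it.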

Beyond Basic Detection: Unveiling the Nuanced Nature of Security Hotspots

Our approach goes beyond simply flagging obvious threats. It extends to recognizing code that might appear safe but harbors a higher risk due to subtle nuances. This dual capability is crucial: it helps identify clear-cut vulnerabilities and also highlights areas where extra caution is warranted, even if no immediate threat is evident. By contrast, many classic static analysis approaches require full syntax tree resolution in order to detect vulnerabilities and can easily break down as soon as they encounter unfamiliar syntax or frameworks.

Example: Detecting SQL Injection Vulnerabilities

Consider a scenario where a piece of code is handling user input for database queries.

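    import sqlite3

    # `request` is assumed to be a Flask-style request object.
    user_input = request.args.get('query')

    # Vulnerable: user input is concatenated directly into the SQL string.
    query = "SELECT * FROM products WHERE name = '" + user_input + "'"
    conn = sqlite3.connect('database.db')
    cursor = conn.cursor()
    cursor.execute(query)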

Traditional security static analysis checks might just look for regex patterns or require parsing the abstract syntax tree. For the above example, they might look for the + operator being used to concatenate strings, especially if passed to something called cursor.execute.
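As a rough illustration of such a hard-coded check, the sketch below flags lines where a string literal is concatenated with an identifier and a SQL keyword appears on the same line. The patterns and the `flag_lines` helper are hypothetical and far simpler than what real tools ship, but they show both the strength and the blind spot of the approach.

```python
import re

# Naive rule: a quote character next to "+" next to an identifier
# (in either order), on a line that also contains a SQL keyword.
CONCAT = re.compile(r"""(["']\s*\+\s*[A-Za-z_]\w*)|([A-Za-z_]\w*\s*\+\s*["'])""")
SQL_KEYWORD = re.compile(r"\b(SELECT|INSERT|UPDATE|DELETE)\b", re.IGNORECASE)


def flag_lines(source: str) -> list[int]:
    """Return 1-based line numbers that match the naive concatenation rule."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if CONCAT.search(line) and SQL_KEYWORD.search(line)
    ]


direct = '''user_input = request.args.get('query')
query = "SELECT * FROM products WHERE name = '" + user_input + "'"
cursor.execute(query)
'''

# Same risk, but the query is assembled inside a helper defined elsewhere.
indirect = '''query = add_in_clause(existing_query, "category", user_input)
cursor.execute(query)
'''

print(flag_lines(direct))    # [2]
print(flag_lines(indirect))  # []
```

The rule catches the direct concatenation but reports nothing once the string building moves behind a function call.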

These checks can be powerful for very common and standard language or framework usage. However, they often break down with newer or custom patterns commonly seen in enterprise software. For example:

    def add_in_clause(query, field, values):
        if not values:
            return query
        # Vulnerable: each value is quoted by hand and spliced into the SQL text.
        quoted = ", ".join("'" + v + "'" for v in values)
        in_clause = f"{field} IN ({quoted})"
        return query + " AND " + in_clause if "WHERE" in query else query + " WHERE " + in_clause

    query = add_in_clause(existing_query, "category", user_input)
    # Execute the query using a database connection (not shown)

The code here is slightly more complex and could thus be harder to detect with traditional static analysis checks. Perhaps the code is not all in one file, or the add_in_clause function lives in a different file from the code that calls it. However, our AI model, much like an expert senior security engineer, is able to synthesize overall patterns and determine whether this looks like something that could warrant security review, thereby catching less obvious but potentially dangerous practices that could lead to SQL injection vulnerabilities.
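For context on what a reviewer would typically look for in a hotspot like this, here is one possible safer shape for the helper, sketched with the standard `sqlite3` module. The allowlist of column names and the changed return signature are assumptions made for illustration, not a description of any particular fix.

```python
import sqlite3

# Hypothetical allowlist: column names cannot be bound as parameters,
# so they are checked against known-good values instead.
ALLOWED_FIELDS = {"category", "name"}


def add_in_clause(query, params, field, values):
    """Return (query, params) with a parameterized IN clause appended."""
    if not values:
        return query, params
    if field not in ALLOWED_FIELDS:
        raise ValueError(f"unexpected column: {field}")
    # One "?" placeholder per value; the values travel separately from the SQL.
    placeholders = ", ".join("?" for _ in values)
    in_clause = f"{field} IN ({placeholders})"
    joiner = " AND " if "WHERE" in query else " WHERE "
    return query + joiner + in_clause, params + list(values)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, category TEXT)")
conn.executemany(
    "INSERT INTO products VALUES (?, ?)",
    [("hammer", "tools"), ("apple", "food"), ("saw", "tools")],
)

# The second value is an injection attempt; it is treated as plain data.
user_input = ["tools", "x') OR 1=1 --"]
query, params = add_in_clause("SELECT name FROM products", [], "category", user_input)
rows = conn.execute(query, params).fetchall()
print(query)
print(sorted(rows))
```

Because the values are bound rather than spliced into the SQL text, the injection attempt matches no rows and only the two products in the "tools" category are returned.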

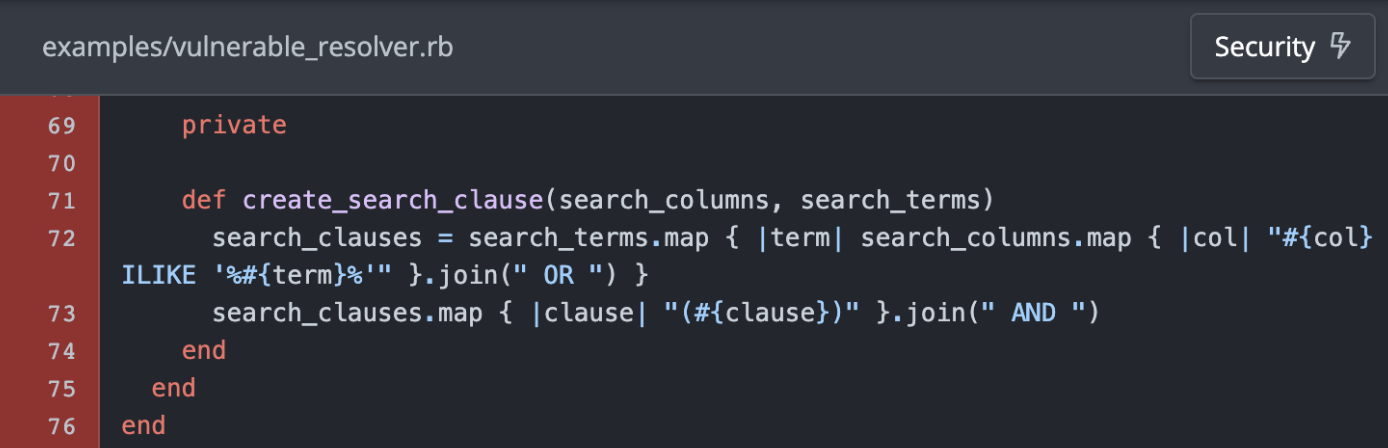

Case Study: Identifying Security Hotspots for Real Vulnerability

HackerOne found a SQL injection in the past year that was disclosed publicly here. We retroactively ran the code through our security hotspot detection model and it was able to identify the code as a security hotspot. Shown here is an example of the code that was flagged by our model (illustrated by the red highlighting):

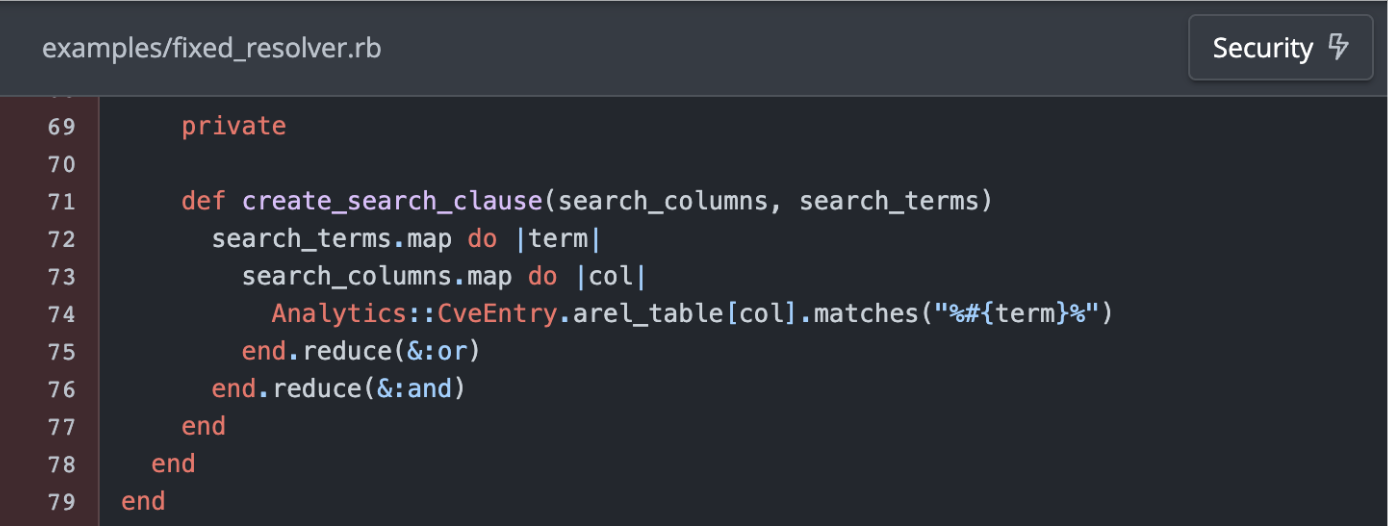

Now, let’s take a look at the same code after the fix.

You will notice very faint red highlighting in the same area as before. Our model still identifies the code as a potential security hotspot that could warrant careful review to ensure that the search clause is used safely. However, the more obvious SQL injection vulnerability has been fixed, so the code is no longer flagged at as high a risk as before.

Integrating Security Hotspot Detection in Your Workflow

Incorporating Security Hotspot detection into your code review process is seamless. When a pull request is made, our Smart Review Selection feature kicks in, scanning the code for potential hotspots. This pre-review process helps prioritize the most critical areas for review, ensuring that human time is spent where it matters most. If a review is deemed necessary, the code is automatically routed to our security engineers for a thorough check. Our security engineers are then shown the exact risk areas and outputs identified by the AI model and by our extensive SAST tooling and partnerships, allowing them to focus their attention on the most critical sections of code.

The Role of Human Expertise

While our AI model provides invaluable insights, the final decision-making power rests with human reviewers. The model serves as a guide, directing attention to areas with a higher probability of security issues, but it is the expertise of our security engineers that truly drives the review process. This combination of AI and human intelligence ensures a balanced and thorough review, maximizing the efficiency and effectiveness of our security checks. We believe this combination is the future of code review, available today, and that purely AI-based approaches are still not ready for prime time.

Continuous Improvement and Adaptation

One of the key strengths of our AI-driven Security Hotspot model is its ability to learn and adapt. As new security threats emerge and coding practices evolve, we will continue updating and improving our model’s capability, ensuring it remains effective in identifying the latest security risks. This dynamic nature of the AI model is crucial in keeping up with the fast-paced changes in software development and security landscapes.

The Bigger Picture: Comprehensive Security Review

While our Security Hotspot detection is a vital component, it’s just one part of our broader Smart Review Selection strategy. We also incorporate other signals, such as static code analysis and historical code metadata, and perform random sampling on top of these signals as a mechanism to insert and scale human intelligence into the process. This multi-faceted approach ensures a well-rounded review process, covering all bases from static vulnerabilities to potential runtime issues.
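One way to picture how these signals could combine is the sketch below. Everything in it is hypothetical: the `PullRequestSignals` fields, the threshold, the sampling rate, and the order in which the signals are checked are assumptions chosen for illustration, not the actual selection logic.

```python
import random
from dataclasses import dataclass


@dataclass
class PullRequestSignals:
    hotspot_score: float         # hypothetical model output in [0, 1]
    sast_findings: int           # number of static analysis findings
    touches_risky_history: bool  # e.g. files with past security fixes


def select_for_review(signals, rng, hotspot_threshold=0.7, sample_rate=0.05):
    """Return (selected, reason) for a pull request."""
    if signals.hotspot_score >= hotspot_threshold:
        return True, "hotspot"
    if signals.sast_findings > 0:
        return True, "static_analysis"
    if signals.touches_risky_history:
        return True, "history"
    # Random sampling: occasionally review changes that no signal flagged,
    # so humans still see a slice of "quiet" pull requests.
    if rng.random() < sample_rate:
        return True, "random_sample"
    return False, "skipped"


rng = random.Random(0)
print(select_for_review(PullRequestSignals(0.91, 0, False), rng))  # (True, 'hotspot')
print(select_for_review(PullRequestSignals(0.10, 2, False), rng))  # (True, 'static_analysis')
```

The random-sample branch is what keeps human reviewers looking at changes the automated signals considered low risk.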

Conclusion: A Step Towards Smarter Security

In conclusion, we believe that the integration of AI in identifying security hotspots represents a significant leap forward in the realm of secure code review. By combining the nuanced detection capabilities of our AI models with the expertise of human reviewers, we offer a powerful tool that enhances security without impeding the development process. As we continue to refine and improve our models, we remain committed to helping our clients navigate the complex landscape of software security with greater ease and efficiency.

Stay tuned for further updates and insights into our evolving code review technologies. We’re excited to be at the forefront of this journey, harnessing the power of AI to make software development safer and more secure.